This algorithm allows you to identify the most popular elements (trending, in other words) in an incoming data stream.

The decaying window algorithm not only tracks the most recurring elements in an incoming data stream, but also discounts any random spikes or spam requests that might have boosted an element’s frequency. In a decaying window, you assign a score or weight to every element of the incoming data stream. Further, you need to calculate the aggregate sum for each distinct element by adding all the weights assigned to that element. The element with the highest total score is listed as trending or the most popular.

- Assign each element with a weight/score.

- Calculate aggregate sum for each distinct element by adding all the weights assigned to that element.

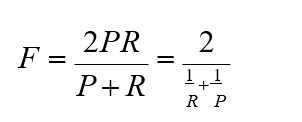

In a decaying window algorithm, you assign more weight to newer elements. For a new element, you first reduce the weight of all the existing elements by a constant factor k and then assign the new element with a specific weight. The aggregate sum of the decaying exponential weights can be calculated using the following formula:

Here, c is usually a small constant of the order or . Whenever a new element, say at+1 , arrives in the data stream you perform the following steps to achieve an updated sum:

1. Multiply the current sum/score by the value (1−c).

2. Add the weight corresponding to the new element.

In a data stream consisting of various elements, you maintain a separate sum for each distinct element. For every incoming element, you multiply the sum of all the existing elements by a value of (1−c). Further, you add the weight of the incoming element to its corresponding aggregate sum.

A threshold can be kept to, ignore elements of weight lesser than that.

Finally, the element with the highest aggregate score is listed as the most popular element.

fifa, ipl, fifa, ipl, ipl, ipl, fifa

Also, let's say each element in sequence has weight of 1.

Let's c be 0.1

The aggregate sum of each tag in the end of above stream will be calculated as below:

Example

For example, consider a sequence of twitter tags below:fifa, ipl, fifa, ipl, ipl, ipl, fifa

Also, let's say each element in sequence has weight of 1.

Let's c be 0.1

The aggregate sum of each tag in the end of above stream will be calculated as below:

fifa

fifa - 1 * (1-0.1) = 0.9

ipl - 0.9 * (1-0.1) + 0 = 0.81 (adding 0 because current tag is different than fifa)

fifa - 0.81 * (1-0.1) + 1 = 1.729 (adding 1 because current tag is fifa only)

ipl - 1.729 * (1-0.1) + 0 = 1.5561

ipl - 1.5561 * (1-0.1) + 0 = 1.4005

ipl - 1.4005 * (1-0.1) + 0 = 1.2605

fifa - 1.2605 * (1-0.1) + 1 = 2.135

fifa - 1.2605 * (1-0.1) + 1 = 2.135

ipl

fifa - 0 * (1-0.1) = 0

ipl - 0 * (1-0.1) + 1 = 1

fifa - 1 * (1-0.1) + 0 = 0.9 (adding 0 because current tag is different than ipl)

ipl - 0.9 * (1-0.01) + 1 = 1.81

ipl - 1.81 * (1-0.01) + 1 = 2.7919

ipl - 2.7919 * (1-0.01) + 1 = 3.764

fifa - 3.764 * (1-0.01) + 0 = 3.7264

In the end of the sequence, we can see the score of fifa is 2.135 but ipl is 3.7264

So, ipl is more trending then fifa

Even though both of them occurred same number of times in input there score is still different.

fifa - 3.764 * (1-0.01) + 0 = 3.7264

In the end of the sequence, we can see the score of fifa is 2.135 but ipl is 3.7264

So, ipl is more trending then fifa

Even though both of them occurred same number of times in input there score is still different.

Advantages of Decaying Window Algorithm:

- Sudden spikes or spam data is taken care.

- New element is given more weight by this mechanism, to achieve right trending output.

Similar Blog : Nitin Decaying Window

All the messages below are just forwarded messages if some one feels hurt about it please add your comments we will remove the post. Host/author is not responsible for these posts.